
Best Local LLMs for Home Automation 2026

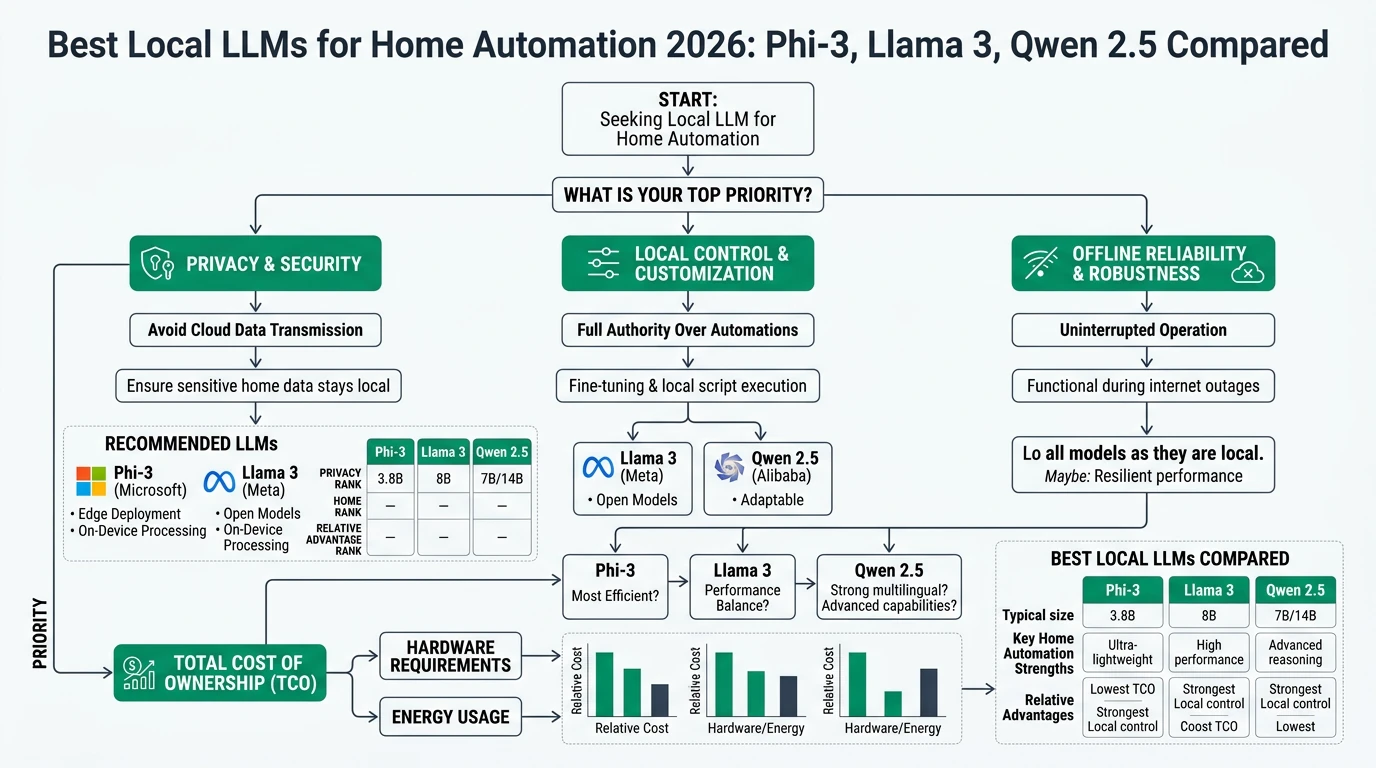

Explore the best local LLMs for home automation in 2026, focusing on privacy, offline reliability, and cost-effectiveness.

Quick answer: Which local LLM is best for home automation in 2026?

Phi-3 is the top choice for privacy-focused, offline home automation. It runs entirely on-device on low-cost hardware such as the Raspberry Pi 5.

Executive Summary

In 2026, choosing a local large language model (LLM) for home automation comes down to privacy, offline reliability, and cost-effectiveness. This guide compares three models: Phi-3, Llama 3, and Qwen 2.5. Phi-3 stands out because it runs efficiently on low-cost hardware like the Raspberry Pi 5, which makes it well suited to privacy-first home automation setups. Llama 3 and Qwen 2.5 have robust ecosystems and community support. However, the sources reviewed here include no benchmarks for either of them on this class of hardware.

Bottom line: Phi-3 is the preferred choice for those prioritizing privacy and cost-effective offline home automation in 2026.

Privacy and Local Control

Privacy is a central concern for anyone choosing a local LLM for home automation. Running the model offline, with no cloud service involved, keeps sensitive data under the user's control. Phi-3 supports fully offline inference with no API keys or cloud connections¹. This makes it particularly suitable for environments where data privacy is non-negotiable.

Llama 3, developed by Meta, is released with open weights, so users can inspect, run, and modify it locally². However, the sources reviewed here offer no home-automation benchmarks for it on devices like the Raspberry Pi 5. Whether it runs well enough on such hardware to deliver those privacy benefits in practice is therefore unverified. Qwen 2.5 is also released with open weights, but there is no detailed data on its offline performance in this setting, which makes it a less certain choice for privacy-focused users.

Local control is the core of this approach. With Phi-3 deployed on a Raspberry Pi 5, the setup has zero cloud dependencies and all data processing occurs locally¹. This improves privacy and reduces the risk of data breaches associated with cloud-based services. Llama 3 and Qwen 2.5 offer similar open-weight flexibility. Without specific guidance for low-power devices like the Raspberry Pi 5, though, they are harder to recommend for users seeking complete local control.
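One simple way to enforce "zero cloud dependencies" in your own glue code is to refuse to send any prompt to an endpoint outside the local machine or home network. The Python sketch below is illustrative and not part of any cited setup. `is_local_endpoint` is a hypothetical helper, and port 11434 appears only because it is Ollama's default local port.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True only if the URL points at this machine or a private LAN address.

    Hostnames other than "localhost" are rejected rather than resolved, so a
    misconfigured cloud hostname cannot slip through a DNS lookup.
    """
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Not an IP literal (e.g. "api.example.com"): treat it as non-local.
        return False
    return addr.is_loopback or addr.is_private


# Hypothetical endpoints, for illustration only.
for candidate in ("http://localhost:11434", "http://192.168.1.20:11434", "https://api.example.com/v1"):
    print(candidate, is_local_endpoint(candidate))
```

Rejecting unknown hostnames instead of resolving them is a deliberately conservative choice. A typo or a misconfigured cloud URL fails closed rather than leaking a prompt.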

Offline Reliability

Offline reliability keeps a home automation system working when the internet connection drops. Phi-3 runs offline on a Raspberry Pi 5 with only 2.2 GB of RAM and generates about 2.8 tokens per second¹. That speed is modest but workable for short, command-style replies, and it makes Phi-3 an efficient choice for users who want automation that does not rely on cloud services.
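The sketch below converts reply length into wait time to show what 2.8 tokens per second means in practice. It assumes a steady decode rate and ignores prompt-processing time, which adds further delay on a Raspberry Pi. The reply lengths are hypothetical examples.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time to generate `output_tokens` at a steady decode rate (prompt processing ignored)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


PI5_PHI3_TOKENS_PER_SECOND = 2.8  # figure reported for Phi-3 on a Raspberry Pi 5

# Hypothetical reply lengths: a terse device command vs. a conversational answer.
for label, tokens in (("short command reply", 14), ("two-sentence answer", 60)):
    secs = generation_seconds(tokens, PI5_PHI3_TOKENS_PER_SECOND)
    print(f"{label}: {tokens} tokens -> {secs:.1f} s")
```

Under these assumptions, a 14-token command reply takes about 5 seconds and a 60-token answer about 21 seconds. That is why short replies, such as compact JSON commands, matter on this hardware.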

Llama 3 can run on CPUs, but GPU inference typically needs 4-8 GB of VRAM, which may not be feasible for users deploying on low-cost hardware². With no benchmarks for devices like the Raspberry Pi 5, its suitability for offline home automation is harder to judge. Qwen 2.5 likewise lacks detailed performance metrics in these sources, although the family does include small variants (down to 0.5B parameters) intended for lightweight deployments.
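A common rule of thumb is that weight memory equals parameters × bits per weight ÷ 8 bytes. The sketch below applies it to Llama 3 8B and to Phi-3-mini (3.8B parameters), assuming 4-bit and 8-bit quantization. It counts weights only. The KV cache and runtime overhead come on top, so treat the results as lower bounds.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    A lower bound: KV cache, activations and runtime overhead are not included.
    """
    return params_billions * bits_per_weight / 8


# Parameter counts in billions; quantization levels are illustrative assumptions.
for name, params in (("Llama 3 8B", 8.0), ("Phi-3-mini", 3.8)):
    for bits in (4, 8):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Llama 3 8B comes out at 4.0 GB at 4-bit and 8.0 GB at 8-bit, consistent with the 4-8 GB range above. Phi-3-mini at 4-bit needs about 1.9 GB for weights, in line with the reported 2.2 GB total.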

The ability to operate reliably offline is a significant advantage for Phi-3, particularly in scenarios where internet connectivity is unstable or unavailable. By maintaining functionality without external dependencies, users can ensure that their home automation systems continue to operate seamlessly, providing peace of mind and uninterrupted service.

Total Cost of Ownership

Total cost of ownership (TCO) is a critical consideration for users deploying local LLMs for home automation. Phi-3 offers a compelling value proposition: the Raspberry Pi 5 it runs on costs less than $100, and power requirements are minimal¹,². This makes it attractive for budget-conscious users who want advanced automation features without a significant financial investment.

Llama 3 has a robust ecosystem and strong community support. However, it typically requires an entry-level GPU, which can raise the initial setup cost to around $200 or more². These sources do not quantify Qwen 2.5's hardware costs. If its requirements are similar to Llama 3's, it would be a less cost-effective choice for users prioritizing affordability.

Hidden costs also affect TCO, especially electricity consumption and the compute needed for fine-tuning. Phi-3 draws little power and can be fine-tuned on a standard laptop with no cloud compute bills, which strengthens its case as a cost-effective solution for home automation¹,². By contrast, the higher hardware requirements of Llama 3 and Qwen 2.5 may lead to higher operating costs over time.
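Electricity is the easiest hidden cost to estimate. The sketch below computes first-year cost as the hardware price plus the energy used by an always-on device. The hardware prices follow the figures above. The average wattages (5 W and 60 W) and the $0.15/kWh tariff are illustrative assumptions, so substitute your own measurements.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(avg_watts: float, price_per_kwh: float) -> float:
    """Electricity cost of running a device 24/7 for one year."""
    kwh_per_year = avg_watts * HOURS_PER_YEAR / 1000
    return kwh_per_year * price_per_kwh


def first_year_cost(hardware_cost: float, avg_watts: float, price_per_kwh: float) -> float:
    """Up-front hardware plus one year of always-on electricity."""
    return hardware_cost + annual_energy_cost(avg_watts, price_per_kwh)


PRICE_PER_KWH = 0.15  # assumed tariff in $/kWh

# (hardware cost in $, assumed average draw in W)
setups = {
    "Raspberry Pi 5 + Phi-3": (100, 5),
    "Entry-level GPU box + Llama 3": (200, 60),
}
for name, (hardware, watts) in setups.items():
    print(f"{name}: ${first_year_cost(hardware, watts, PRICE_PER_KWH):.2f} in year one")
```

Under these assumptions, the Pi setup uses about 43.8 kWh a year (roughly $6.57). The GPU machine uses about 525.6 kWh (roughly $78.84), and that gap grows every year the system stays on.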

Key Considerations for Choosing a Local LLM

- Assess privacy needs and ensure offline capability.

- Evaluate hardware requirements and compatibility.

- Consider total cost of ownership, including hidden costs.

- Examine community support and ecosystem robustness.

- Verify model performance on desired hardware platforms.

Product Ecosystem and Support

The ecosystem around a local LLM strongly affects how easy it is to use and to get support for. Phi-3 belongs to Microsoft's Phi family and is supported on platforms such as Ollama, Hugging Face, and Azure³,⁴. This ecosystem gives users plenty of resources for deployment, fine-tuning, and troubleshooting, which makes Phi-3 accessible to non-expert users.
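For readers deploying through Ollama, the sketch below shows how home automation glue code might send a command to Phi-3 through Ollama's local REST API (`POST /api/generate`). It assumes Ollama is running on its default port with the `phi3` model pulled. The system prompt and the `{"device", "action"}` reply shape are hypothetical and should be adapted to your automation platform.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint

# Hypothetical instruction; adapt the JSON shape to your automation platform.
SYSTEM_PROMPT = (
    "You control a smart home. Reply only with JSON of the form "
    '{"device": <string>, "action": <string>}.'
)


def build_request(command: str, model: str = "phi3") -> dict:
    """Build a non-streaming /api/generate request body for a home command."""
    return {
        "model": model,
        "system": SYSTEM_PROMPT,
        "prompt": command,
        "format": "json",  # ask Ollama to constrain the output to valid JSON
        "stream": False,   # return one JSON object instead of a token stream
    }


def ask_local_model(command: str) -> dict:
    """Send the command to the local Ollama server and parse the model's JSON reply."""
    body = json.dumps(build_request(command)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        reply = json.load(resp)
    # The generated text is in the "response" field; with format="json" it is itself JSON.
    return json.loads(reply["response"])
```

Calling `ask_local_model("Turn off the kitchen lights")` sends the request and returns the parsed reply. Even with JSON mode, a small model can return unexpected keys, so validate the result before it reaches any device.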

Llama 3, Meta's open-weight model, has strong community support and a vibrant ecosystem for fine-tuning and customization². However, it lacks specific guidance for home automation on low-power devices like the Raspberry Pi 5, which may put off users who want a straightforward deployment. Qwen 2.5 is part of Alibaba's offerings but has no ecosystem documentation specific to home automation, so it is a less certain choice for users who prioritize comprehensive support.

Support resources and community engagement strongly influence a local LLM's ease of use and long-term viability. Phi-3 integrates with established platforms and has documented home automation deployments, which makes it a standout choice for users seeking a well-supported, reliable solution¹,³,⁴.

Security and Privacy Implications

Security and privacy are paramount when deploying local LLMs for home automation. Because Phi-3 can run entirely offline on a Raspberry Pi 5, sensitive data stays secure and under the user's control¹. This minimizes exposure to data breaches and unauthorized access through third-party services and gives home automation a robust privacy-first foundation.

Llama 3's open weights make thorough security audits and customization possible². However, there are no benchmarks for it on low-power devices like the Raspberry Pi 5, so it is unclear whether it can run fully locally on that class of hardware. In this context, its security benefits remain largely theoretical. Qwen 2.5 is also released with open weights, but the sources give no detailed data on its security characteristics, so it is a less certain choice for privacy-focused users.

Vetting fine-tuning datasets is essential. Phi-3 was trained on heavily filtered web data and synthetic data¹. That does not protect a model you fine-tune yourself, because any dataset used for customization can introduce unwanted behavior. Users must verify the integrity and content of those datasets to keep their home automation systems secure and private.
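Verifying a dataset's integrity can start with two steps. First, compare its SHA-256 checksum with a value published by a source you trust. Second, check every record against the schema your fine-tuning script expects. The sketch below does both for a JSONL file. The `prompt`/`completion` schema and the function names are hypothetical.

```python
import hashlib
import json
from pathlib import Path

REQUIRED_KEYS = {"prompt", "completion"}  # hypothetical schema for a fine-tuning JSONL file


def sha256_of(path: Path) -> str:
    """Stream the file through SHA-256 so large datasets need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 16), b""):
            digest.update(chunk)
    return digest.hexdigest()


def check_dataset(path: Path, expected_sha256: str) -> list[str]:
    """Return a list of problems; an empty list means both checks passed."""
    problems = []
    if sha256_of(path) != expected_sha256.lower():
        problems.append("checksum mismatch: file differs from the published version")
    with path.open(encoding="utf-8") as fh:
        for lineno, line in enumerate(fh, start=1):
            if not line.strip():
                continue  # ignore blank lines
            try:
                record = json.loads(line)
            except json.JSONDecodeError:
                problems.append(f"line {lineno}: not valid JSON")
                continue
            if not isinstance(record, dict) or not REQUIRED_KEYS.issubset(record):
                problems.append(f"line {lineno}: missing one of {sorted(REQUIRED_KEYS)}")
    return problems
```

A matching checksum only proves the file is the one you expected. It says nothing about whether the content is safe, so the records still need human review.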

Setup Complexity and Support Burden

Setup complexity determines whether non-expert users can deploy a local LLM for home automation at all. Phi-3 is simple to set up, because deployment on a Raspberry Pi 5 requires minimal hardware and software configuration¹. Combined with support from platforms like Ollama and Hugging Face, this makes it a good choice for users who want a straightforward, hassle-free deployment.
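Part of what keeps the setup simple is that Ollama can report which models are already installed. The sketch below queries the local `GET /api/tags` endpoint and checks for a model by name. A setup script can use this to tell the user to run `ollama pull phi3` if the model is missing. It assumes a default local Ollama install, and the helper names are hypothetical.

```python
import json
import urllib.request

TAGS_URL = "http://localhost:11434/api/tags"  # lists models pulled into a local Ollama install


def has_model(tags: dict, name: str) -> bool:
    """True if an installed model matches `name`, with or without a tag suffix like ':latest'."""
    for model in tags.get("models", []):
        installed = model.get("name", "")
        if installed == name or installed.split(":", 1)[0] == name:
            return True
    return False


def fetch_tags(url: str = TAGS_URL) -> dict:
    """Query the local Ollama server for its installed models."""
    with urllib.request.urlopen(url, timeout=5) as resp:
        return json.load(resp)
```

`has_model(fetch_tags(), "phi3")` returns `False` until the model is pulled. Matching on the name before the colon treats `phi3:latest` and `phi3` as the same model.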

Llama 3 and Qwen 2.5 have robust ecosystems but typically require more complex setups. Their hardware requirements are higher, and there is little specific guidance for low-power devices². This complexity can be a barrier for users without technical expertise and may mean a heavier support burden over time.

Phi-3 can also be fine-tuned on a standard laptop with no extra cloud costs, which adds to its appeal as a user-friendly solution¹,². Because setup complexity and support requirements stay low, users can focus on what their local LLM does for their home rather than on technical hurdles.
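The sources do not say how laptop fine-tuning is done. One common parameter-efficient approach, named here as a stand-in, is LoRA. LoRA freezes the original weights and trains two small low-rank matrices for each adapted weight matrix. The arithmetic below assumes 32 layers, hidden size 3072, rank 8, and adapters on two square projections per layer, and it shows why the trainable parameter count stays small.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA adds A (rank x d_in) and B (d_out x rank) next to one frozen weight matrix."""
    return rank * (d_in + d_out)


# Assumed shape: 32 layers, hidden size 3072, rank-8 adapters on two square projections per layer.
LAYERS, HIDDEN, RANK = 32, 3072, 8
PHI3_MINI_PARAMS = 3.8e9  # Phi-3-mini parameter count

trainable = LAYERS * 2 * lora_trainable_params(HIDDEN, HIDDEN, RANK)
print(f"{trainable:,} trainable parameters ({trainable / PHI3_MINI_PARAMS:.3%} of the model)")
```

Under these assumptions, about 3.1 million parameters are trained, under 0.1% of Phi-3-mini's 3.8 billion. This is one reason parameter-efficient fine-tuning can fit on ordinary laptop hardware.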

Price Model and Hidden Costs

Pricing and hidden costs are critical considerations for budget-conscious users. Phi-3 is highly cost-effective, with a total setup cost of around $150 including hardware and peripherals¹,². Its low power consumption and the ability to fine-tune on a standard laptop without cloud costs add to its appeal as a budget-friendly option.

Llama 3 offers a robust ecosystem and strong community support, but it typically requires an entry-level GPU, which can push the initial setup cost to around $250 or more². These sources do not quantify Qwen 2.5's costs. If its hardware requirements are similar, it would be less cost-effective for users prioritizing affordability.


FAQ


What makes Phi-3 the best choice for home automation?

Phi-3 offers excellent privacy and offline reliability, running efficiently on low-cost hardware like the Raspberry Pi 5, making it ideal for privacy-first home automation setups.

Can Llama 3 be used for home automation?

Yes. Llama 3 runs locally and has strong community support and an open ecosystem. However, the sources reviewed here include no benchmarks for low-power devices like the Raspberry Pi 5, so it is a less proven choice for offline home automation on such hardware.

Is Qwen 2.5 suitable for privacy-focused users?

Qwen 2.5 is released with open weights, but there is little detailed data on its offline capabilities and privacy characteristics. That makes it a less certain choice for privacy-focused users.

What are the hidden costs of deploying local LLMs?

Hidden costs include electricity consumption and fine-tuning compute resources. Phi-3’s low power draw and ability to fine-tune on a standard laptop help minimize these costs.

How does the ecosystem impact the usability of local LLMs?

A robust ecosystem provides users with resources for deployment, fine-tuning, and troubleshooting, enhancing the usability and long-term viability of local LLMs.

Primary Sources Table

| Index | Title/Description | Direct URL |
|---|---|---|
| [1] | How To Run A Lightweight LLM Like Phi-3 On A Raspberry Pi 5 For Privacy-First Home Automation | https://www.alibaba.com/product-insights/how-to-run-a-lightweight-llm-like-phi-3-on-a-raspberry-pi-5-for-privacy-first-home-automation.html |
| [2] | Phi-3 Tutorial: Hands-On With Microsoft’s Smallest AI Model | https://www.datacamp.com/tutorial/phi-3-tutorial |
| [3] | Best Lightweight LLMs in 2026: Speed, Efficiency and Innovation | https://tech-now.io/en/blogs/best-lightweight-llms-in-2026-speed-efficiency-and-innovation |
| [4] | Tiny but mighty: The Phi-3 small language models with big potential | https://news.microsoft.com/source/features/ai/the-phi-3-small-language-models-with-big-potential/ |
| [5] | Phi-3 Technical Report (arXiv PDF) | https://arxiv.org/pdf/2404.14219 |
| [6] | Phi Open Models - Small Language Models (Azure) | https://azure.microsoft.com/en-us/products/phi |

Conclusion

The choice of a local LLM for home automation in 2026 hinges on privacy, offline reliability, and total cost of ownership. Phi-3 emerges as the top choice for users prioritizing privacy and cost-effectiveness, because it runs fully offline on low-cost hardware like the Raspberry Pi 5. Llama 3 and Qwen 2.5 offer robust ecosystems and community support, but the lack of benchmarks on low-power devices limits their appeal for privacy-focused users.

For further insights into privacy-focused home automation solutions, explore our guides on Apple HomeKit Secure Video vs Local NVR for Privacy, Best Hardware for Local AI Smart Home 2026, and Best Local Storage Security Cameras Without Subscription 2026.

Footnotes

1. How To Run A Lightweight LLM Like Phi-3 On A Raspberry Pi 5 For Privacy-First Home Automation

2. Best Lightweight LLMs in 2026: Speed, Efficiency and Innovation

3. Tiny but mighty: The Phi-3 small language models with big potential